After some discussion on the Aldebaran Robotics Developer Program’s forum, a few of the UK developers decided to meet up in London. We were lucky enough to be joined by Jerome Monceaux, the architect of the NAO platform, and Akim, the developer program community manager, who came over from Paris to spend the day with us.

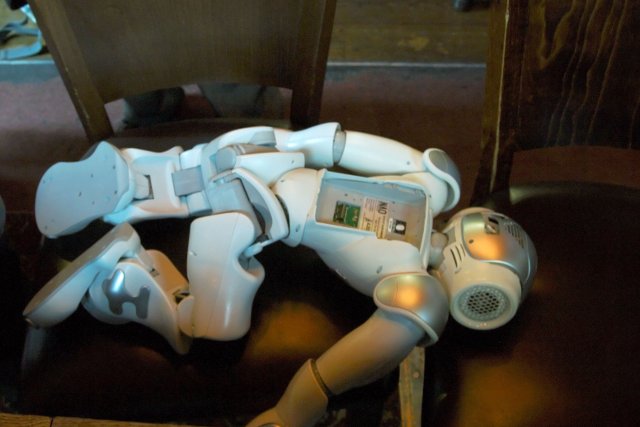

After introducing ourselves, the first order of business was a little surgery

on Tim’s NAO to replace some stripped screws on the battery compartment.

Here’s Tim’s NAO with the battery out - the serial number is written in the

battery compartment - this apparently differs from the body serial number

reported by software.

NAO4

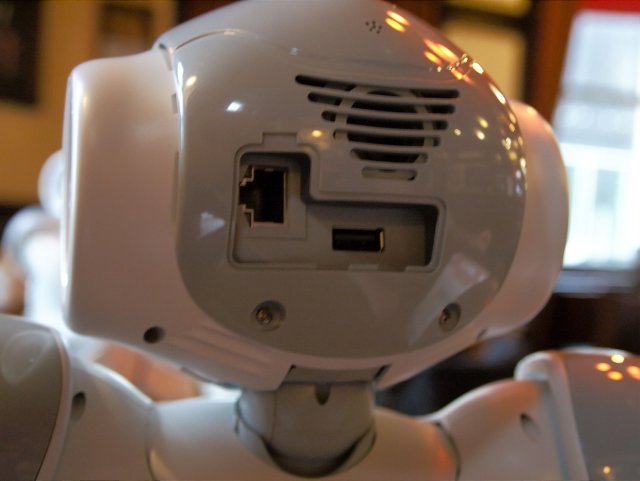

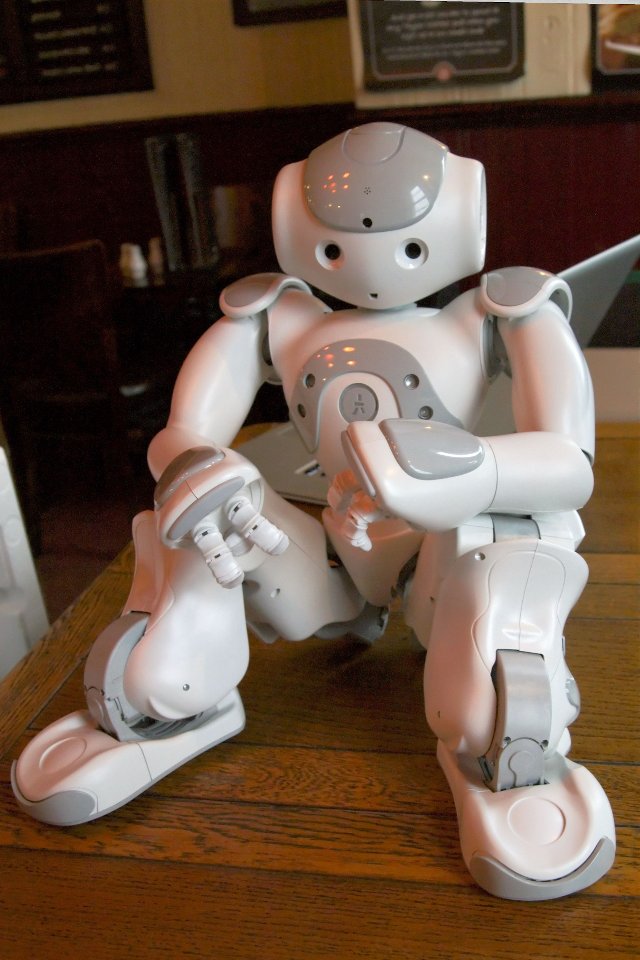

The big surprise of the day was that Akim & Jerome had brought a NAO4 with them - naturally it did not stay in its box for long after we found out. One visible difference between NAO3 and NAO4 is that NAO4 has a standard USB type-A socket, which can be used to connect standard USB equipment such as a Bluetooth interface (for regulatory reasons Aldebaran are not currently able to ship NAO with integrated Bluetooth).

Jerome took us through a short presentation on the improvements to the

platform in NAO4. Overall the body is much the same except for an increase in

the PWM frequency used to control the motors. It’s NAO4’s head that contains

most of the interesting changes, including:

- a faster processor (Intel Atom)

- significantly more RAM

- improved cooling system

- better cameras - not only do these have increased resolution and better noise reduction, NAO4 is capable of running both cameras simultaneously (NAO3 can only use one camera at a time).

- Much better speech recognition - the Acapela engine has been replaced by Nuance.

- The cameras are more securely attached to the head - in NAO3 it is possible for the cameras to be knocked slightly out of alignment if NAO falls over; this will not happen with NAO4

NAO4 boots noticeably faster than NAO3.

All developers currently on the NAO development programme will be offered an

upgrade to a NAO4 head (keeping the NAO3 body).

NAO store

Jerome gave a demonstration of the NAOstore - it works pretty much how you’d

expect a modern app store to work. There are several cool features though such

as:

- Apps getting automatically downloaded direct to your NAO after adding them via the NAOstore website.

- The ability to set a trigger phrase to cause NAO to run the app

At the moment it’s only possible to trigger apps in response to a spoken phrase, but in future Aldebaran may add other ways to trigger an app. NAO’s life was already a means to run various behaviours on NAO - it’s now becoming a framework to manage applications downloaded from the NAOstore.

NAO’s life has a concept of channels. A channel is just a way to group behaviours that receive automatic updates and have contextual triggers - the robot decides when to trigger the behaviour. At the moment there is only one channel, but the intent is to add the ability to create new ones.

Developing for NAO

One of the best parts of the day was having the chance to grill Jerome about

developing for NAO and he graciously answered all our questions.

Jerome advised us to start with small, simple applications in order to get used to the environment without also having to try to create complex behaviour at the same time. As an example he demonstrated the “touch my head” application. See the video below.

There are several ways to create applications for NAO

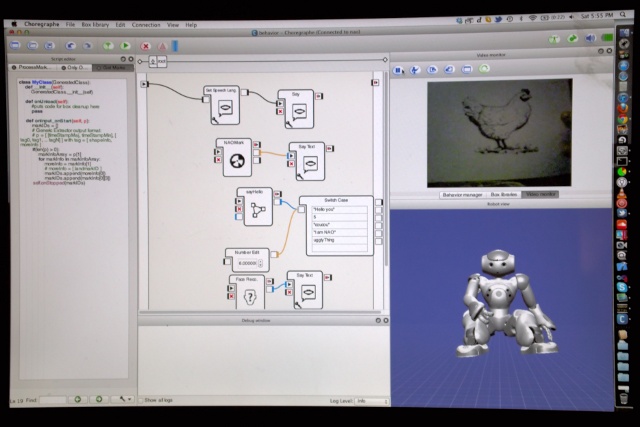

- Graphically using Choregraphe, which provides a graphical view of component behaviours that can be “wired” together (much like the Lego Mindstorms development environment), combined with a timeline view with key frames (similar to the way the original Flash editor works)

- Python - Python code can either run independently or be integrated with Choregraphe. The two are tightly integrated, so new behaviours can be implemented in Python and represented as “boxes” which can be wired together graphically in Choregraphe

- Native code (C++)

- URBIscript

Having not had much time to play with Choregraphe beyond some simple experiments, I was surprised by the depth and power that Jerome demonstrated. All boxes shown in Choregraphe have a script, a flow diagram and a timeline (not visible by default). Each timeline can have multiple keyframes, with each keyframe having its own flow diagram. For example you might use:

- one frame to initialize configuration data

- one to download functions

- another to perform the action

The script associated with a box is a Python class with methods corresponding to events linked to the box (e.g. receiving input). You can define as many inputs and outputs on a box as required, and each will have a corresponding method or property in the Python script. The onLoad method, called when the box is loaded, provides the ability to create objects for use by the rest of the behaviour.
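
To make the shape of a box script concrete, here is a minimal sketch that runs off-robot. The names `GeneratedClass`, `MyClass`, `onInput_onStart` and `onStopped` follow the conventions I recall from Choregraphe-generated scripts, but treat them as assumptions rather than a confirmed API; the `GeneratedClass` below is only a stand-in so the example can execute on a desktop.

```python
class GeneratedClass(object):
    """Stand-in (assumption) for the base class Choregraphe supplies at runtime."""

    def __init__(self):
        self.fired = []  # records output calls so the sketch can run off-robot


class MyClass(GeneratedClass):
    def __init__(self):
        GeneratedClass.__init__(self)
        self.greeting = None

    def onLoad(self):
        # Called when the box is loaded: create objects the behaviour needs.
        self.greeting = "Hello from NAO"

    def onUnload(self):
        # Release whatever onLoad created.
        self.greeting = None

    def onInput_onStart(self):
        # One method per input; the input is assumed to be named "onStart".
        self.onStopped(self.greeting)

    def onStopped(self, value):
        # On the robot an output would be provided for you; this stub records the call.
        self.fired.append(("onStopped", value))


box = MyClass()
box.onLoad()
box.onInput_onStart()
print(box.fired)
```

The point is only the structure: one class per box, one method per input, and setup work kept in the load hook.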

Having assumed that “real” applications would need to be developed in native code or Python, I now feel that a better approach may be to develop modular, reusable components and wire them together using Choregraphe.

I’ve spent most of the last ten years writing in Java, JavaScript & C++ and have a strong interest in other JVM languages, so I was a little disappointed that there was not better integration with the JVM. I can understand Aldebaran Robotics’ reasoning, though - Python has always been more popular in the academic community than Java, and I imagine that most NAOs outside Aldebaran are currently located in academia. Python is a powerful and relatively fast language, and although I don’t really like the way indentation is used to indicate structure, I imagine I’ll be spending a lot more time writing in Python from now on.

That said, Jerome told us that SOAP is used for communication between remote components, so it’s perfectly feasible to write code on a remote computer that interacts with NAO. Version 1.12 of the NAO software (currently in beta) contains a Java binding that allows any module to be invoked from Java. However, since NAO does not run a Java virtual machine, use of Java is limited to software on a remote machine. It’s still a great feature to have, and I’m sure I’ll be making use of it once I feel ready to create projects too large to run only on NAO.

The NAO runtime is divided into modules implementing the ALModule interface. Module code is not invoked directly - instead a proxy object is created and used to perform operations on a module. NAOqi contains a broker, which is just a bag of modules and provides a way to locate a module by name. Operations on proxies are synchronous by default, but it is possible to call methods asynchronously: if myproxy.foo() invokes the synchronous operation “foo” on myproxy, then myproxy.post.foo() will execute it asynchronously and return an ID that allows the asynchronous operation to be referenced (for example to test whether it has finished).
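
The broker, proxy and post pattern can be modelled in a few lines of plain Python. This is a toy illustration of the idea, not the NAOqi implementation: `ToyBroker`, `ToyProxy`, `Speech`, `is_running` and `wait` are all hypothetical names chosen for this sketch, and a background thread stands in for whatever NAOqi really does.

```python
import itertools
import threading
import time


class ToyBroker(object):
    """A 'bag of modules' that can be looked up by name."""

    def __init__(self):
        self._modules = {}

    def register(self, name, module):
        self._modules[name] = module

    def proxy(self, name):
        return ToyProxy(self._modules[name])


class _Post(object):
    """Turns proxy.post.foo(...) into a background call that returns a task ID."""

    def __init__(self, proxy):
        self._proxy = proxy

    def __getattr__(self, method_name):
        def start(*args):
            return self._proxy._start(method_name, args)
        return start


class ToyProxy(object):
    def __init__(self, module):
        self._module = module
        self._tasks = {}
        self._ids = itertools.count(1)
        self.post = _Post(self)

    def __getattr__(self, name):
        # Synchronous path: forward to the module, so the call blocks as usual.
        return getattr(self._module, name)

    def _start(self, method_name, args):
        task_id = next(self._ids)
        target = getattr(self._module, method_name)
        thread = threading.Thread(target=target, args=args)
        self._tasks[task_id] = thread
        thread.start()
        return task_id

    def is_running(self, task_id):
        return self._tasks[task_id].is_alive()

    def wait(self, task_id):
        self._tasks[task_id].join()


class Speech(object):
    """Hypothetical module with one slow operation."""

    def __init__(self):
        self.said = []

    def say(self, text):
        time.sleep(0.05)
        self.said.append(text)


broker = ToyBroker()
broker.register("Speech", Speech())
speech = broker.proxy("Speech")

speech.say("hello")                # blocks until the call has finished
task = speech.post.say("goodbye")  # returns straight away with an ID
speech.wait(task)                  # block until the background call ends
print(speech.is_running(task), speech.said)
```

The useful property is that the caller never touches the module directly, so the same calling code works whether the module lives in the same process or somewhere else.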

NAOqi contains a fine-grained resource management system. It’s possible to

lock resources for a behaviour and the runtime will prevent other scripts from

using the resource until it’s unlocked. A nice feature is that, since

resources are organised hierarchically, it’s possible with a single lock to

lock the whole robot, a whole limb or a single motor.
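
A hierarchical lock of this kind is easy to picture with a small model. The sketch below is an assumption-laden toy, not NAOqi’s resource manager: the `ResourceTree` class and the slash-separated path scheme (“Body/LArm/LElbowRoll”) are invented for illustration. A lock on a path conflicts with any lock on the same path, an ancestor or a descendant.

```python
class ResourceTree(object):
    """Toy hierarchical lock: names are paths such as 'Body/LArm/LElbowRoll'."""

    def __init__(self):
        self._owners = {}  # locked path -> name of the behaviour holding it

    @staticmethod
    def _overlaps(a, b):
        # Two paths conflict when one equals, or is an ancestor of, the other.
        a_parts, b_parts = a.split("/"), b.split("/")
        shorter = min(len(a_parts), len(b_parts))
        return a_parts[:shorter] == b_parts[:shorter]

    def acquire(self, path, owner):
        for locked, holder in self._owners.items():
            if holder != owner and self._overlaps(path, locked):
                return False  # someone else holds this part of the robot
        self._owners[path] = owner
        return True

    def release(self, path, owner):
        if self._owners.get(path) == owner:
            del self._owners[path]


tree = ResourceTree()
print(tree.acquire("Body/LArm", "wave"))              # lock a whole limb
print(tree.acquire("Body/LArm/LElbowRoll", "dance"))  # refused: inside that limb
print(tree.acquire("Body/RArm/RElbowRoll", "dance"))  # a single motor elsewhere
tree.release("Body/LArm", "wave")
print(tree.acquire("Body/LArm/LElbowRoll", "dance"))  # free again
```

Locking “Body” in this model would reserve the whole robot, which mirrors the single-lock convenience described above.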

NAO includes a version of OpenCV with Python bindings, which can be used for, among other things, image recognition. There is some support for generating training data built into Choregraphe. Below is a photo of the screen when Jerome was demonstrating how to create a training set using Choregraphe.

Random tips

Throughout the day I learned a lot from listening to Jerome (twitter: ), Carl (twitter: @CC64) & Tim (twitter: @mechomaniac). Here’s a selection of useful bits of information:

When saving Choregraphe projects, save them as a directory, not a .crg file, since this makes it easier to commit the project to git and allows changes to each part of the project to be tracked independently.

It’s possible to use ssh to connect to the Linux instance running on NAO. Executing ssh nao@nao.local (default password nao) from a terminal window should open a session on NAO, from which you can invoke Linux commands and start an interactive Python session, allowing you to experiment directly on NAO. If starting Python in this way you’ll need to manually import the modules you need (e.g. import opencv).

There is a logging module (ALLogger) that allows Python code to write to a log in order to help post-mortem debugging. ALLogger can be used either by creating a proxy to it like any other module or by using the self.log reference (with methods debug, info, warn, error) in any Choregraphe script.

There is a preference server running on Aldebaran Robotics’ website from which NAO can download user-dependent configuration information for an application. The communication takes place over an XMPP transport and is in XML format (SOAP?).

NAO marks - these are cards that NAO can recognise. They are all numbered, allowing applications to perform actions based on which card is recognised. There is a PDF on the Choregraphe CD and more can be downloaded (as PDFs) from the Aldebaran website. You can then print these and use the NAOmark box in Choregraphe to detect them.
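
Because each card carries a number, the application side can be as simple as a lookup table from mark number to action. The sketch below assumes the detection step has already produced a mark number; the numbers 64 and 68 and the function names are hypothetical placeholders, so substitute the numbers printed on your own cards.

```python
def say_hello():
    return "hello"


def sit_down():
    return "sitting"


# Hypothetical mark numbers mapped to the action each card should trigger.
ACTIONS = {64: say_hello, 68: sit_down}


def handle_mark(mark_id):
    """Run the action registered for a recognised mark, or do nothing."""
    action = ACTIONS.get(mark_id)
    return action() if action else None


print(handle_mark(64))
print(handle_mark(1))
```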

The Python bindings are the most convenient way to use OpenCV. The

getMethodHelp(“”) function gives interactive help on the named OpenCV method.

Summary

A geek event does not get much better than this (IMHO) - a combination of very knowledgeable (and gracious) people, robots, beer and food! Not only did I learn a lot about NAO but I came away inspired to learn more and develop my own applications. I’m very grateful to Jerome and Akim for giving up their own time to spend a day with us. They demonstrated a sincere enthusiasm and desire to help the NAO developer community, and if there is any justice in the world Aldebaran Robotics and NAO deserve massive success. I think the only question mark regarding NAO is how to bring the platform to a wider audience, and I believe the availability of killer applications will play a part in this. For that Aldebaran needs a vibrant developer community, and it seems to be doing exactly the right things in this regard.

About Aldebaran Robotics & the NAO developer program

You can find out more about Aldebaran Robotics via their website www.aldebaran-robotics.com and about the developer program at developer.aldebaran-robotics.com. The blog is also a good source of information on what NAO developers are doing. You can also follow the NAO developer program on Twitter as @NAOdeveloper and on Facebook.